All of our demos, sorted by most thumbs up first, can be found at the wonderful pouet.net

WE ARE

deepr - founder, coder, listener, speaker, tool creator, solver, enthusiast, fusion unit, idea bank, creative mind, visionary and leader of the group

miStral - the northern spirit, graphics artist, fractal wizard, active discusser, passionate photographer, inspirer and polisher of adapt demos

basscadet - musician, graphics artist, tool developer, typography expert, professional photographer and a human of reason

felor - 3D graphics artist at heart, but also an inspirer, especially for developing better tools for adapt demo making

legend - musician, designer, artist, project focuser, demo maker and mr KISS reminder

humanoid - musician, inspirer and a promising demo tool developer

minomus - musician, chatter and clarifier when needed

ADAPT DEMOS AND TECHNOLOGY OVERVIEW

All of the adapt demos are real-time graphical presentations combined with music. They use various custom-made demo engines with OpenGL or DirectX rendering pipelines.

The music is played either by a self-made synthesizer or by playing a music file with the FMOD sound system library.

All shader code is written in-house in our adapt coding laboratory.

These are then stirred and mixed into demo reality by carefully combining the effects with a set of self-developed and open source tools.

As a technical insight, these demos are made, for example, by exposing the adjustable parameters of the "camera", "mesh", "dof" or "glow" effects to a tool with a piece of script code. This tool then provides a GUI to adjust the parameters, make presets, put things on the timeline and synchronize the visuals with the music.

To make creating these kinds of demos easy and fun, we constantly search for better ways to improve the toolset, the technical stack and the pool of effects.

Some of the demos even use custom self-made scripting languages, but the most recent demos rely on QML-based JavaScript scripting, with tooling implemented in QML as well.

The 2011 to 2019 era adapt demos use an improved version of the GNU Rocket "effect parameter tracker" tool for controlling the parameters of the visual effects on a timeline.

All these adjustments happen in real time, which makes it possible to tweak the parameters quickly with instant visual feedback.
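To make the track idea a bit more concrete: in Rocket, each effect parameter is a named track of keys placed on rows, and each key carries an interpolation mode (step, linear, smooth or ramp) that shapes the value up to the next key. The C++ sketch below re-implements that evaluation for illustration only; it is not Rocket's own code, the names `Key`, `Track`, `setKey` and `valueAt` are hypothetical, and converting music time into a fractional row is assumed to happen elsewhere.

```cpp
#include <algorithm>
#include <cassert>
#include <string>
#include <vector>

// Interpolation modes modelled on the four key types a Rocket track offers.
enum class Interp { Step, Linear, Smooth, Ramp };

struct Key {
    int row;        // timeline row (derived from music time elsewhere; an assumption)
    float value;    // parameter value at this row
    Interp interp;  // how to move from this key towards the next one
};

// A hypothetical named parameter track, e.g. "glow.intensity".
struct Track {
    std::string name;
    std::vector<Key> keys;  // kept sorted by row

    // Insert a key, or overwrite the key already sitting on the same row.
    void setKey(const Key& k) {
        auto it = std::lower_bound(keys.begin(), keys.end(), k.row,
            [](const Key& a, int row) { return a.row < row; });
        if (it != keys.end() && it->row == k.row) *it = k;
        else keys.insert(it, k);
    }

    // Evaluate the track at a fractional row.
    float valueAt(double row) const {
        assert(!keys.empty());
        if (row <= keys.front().row) return keys.front().value;
        if (row >= keys.back().row) return keys.back().value;
        // First key strictly after 'row'; the key before it starts the segment.
        auto next = std::upper_bound(keys.begin(), keys.end(), row,
            [](double r, const Key& k) { return r < k.row; });
        const Key& a = *(next - 1);
        const Key& b = *next;
        double t = (row - a.row) / double(b.row - a.row);
        switch (a.interp) {
            case Interp::Step:   return a.value;                       // hold value
            case Interp::Linear: break;                                // t as is
            case Interp::Smooth: t = t * t * (3.0 - 2.0 * t); break;   // smoothstep
            case Interp::Ramp:   t = t * t; break;                     // ease-in
        }
        return float(a.value + (b.value - a.value) * t);
    }
};
```

Each frame, the engine would convert the current music position into a row (for example rows per beat times elapsed beats) and evaluate every track it uses, so a tweak in the tracker shows up on the very next frame.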

All of our newer demos, from 2020 onwards, rely on the adapt studio time-liner tool. We still develop it and use it only as an internal tool, but it may some day become publicly available as well :).

For more details about the tech and tools, see the end of this page.

SOME BEST OF ADAPT PRODUCTIONS

Assembly - event

ember dream - demo

2020 - year

1st - rank

Lots of particle effects that look 3D but are actually 2D. These flow over more traditional 3D scenes with a persistent theme of different ancient masks.

Our first big demo made with our new timeline effect sequencer tool, which is implemented in QML and communicates with the demo engine over WebSocket during the creation phase.

Assembly - event

chroma space - demo

2019 - year

1st - rank

Millions of particles with 3D volumetric shadows, a special screen-space chromatic ripple effect, plus a technique for propagating waves on the surface of any 3D model, just to mention a couple of highlights of this Chroma Space demo.

Revision - event

surfaced - demo

2019 - year

4th - rank

Our best achievement so far, counted by ranking, in a demo competition outside of Finland. Here the volumetric clouds come with shadows, terrains are generated and lots of particles are emitted to the rhythm of the catchy music track of this demonstration.

Assembly - event

out of the box - 64kb intro

2018 - year

1st - rank

A 64kb executable demonstration that was on and off in the making since about 2010, and because it was started back then, it uses DirectX9 and HLSL for rendering. Its specialties are lots of particles and all mesh objects being generated with one piece of vertex shader code.
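A note on the "one piece of vertex shader code" idea: a common way to do this, which we assume here purely for illustration, is to draw one shared grid of (u, v) points and let the vertex shader map each point onto a parametric surface, so the shapes come from code and a few parameters instead of stored mesh data. The C++ sketch below runs that mapping on the CPU for a torus; `surfacePoint`, `generateMesh` and the torus formula are illustrative choices, not the actual out of the box shader.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Vec3 { float x, y, z; };

// Hypothetical per-vertex "shader": maps a grid coordinate (u, v) in [0,1]^2
// onto a torus with ring radius R and tube radius r. R = 0 yields a sphere.
Vec3 surfacePoint(float u, float v, float R, float r) {
    const float kTwoPi = 6.28318530718f;
    float a = u * kTwoPi;               // angle around the ring
    float b = v * kTwoPi;               // angle around the tube
    float ring = R + r * std::cos(b);   // distance from the ring's axis
    return { ring * std::cos(a), ring * std::sin(a), r * std::sin(b) };
}

// CPU stand-in for the GPU pass: the only input is a flat (u, v) grid that is
// the same for every object; the shape comes entirely from the parameters.
std::vector<Vec3> generateMesh(int segU, int segV, float R, float r) {
    assert(segU > 0 && segV > 0);
    std::vector<Vec3> out;
    out.reserve(std::size_t(segU + 1) * std::size_t(segV + 1));
    for (int j = 0; j <= segV; ++j)
        for (int i = 0; i <= segU; ++i)
            out.push_back(surfacePoint(float(i) / segU, float(j) / segV, R, r));
    return out;
}
```

Swapping the formula, or blending between two formulas with a timeline parameter, would give different objects from the same grid without growing the executable much.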

Simulaatio - event

red smoke - demo

2018 - year

1st - rank

The Red Smoke demo is actually about a person riding a horse in the desert and escaping the inevitable, ending up in a limbo of mesh instancer breakers and finally in a massive cloud of particles that forms the black tiger imagery at the end.

Assembly - event

zoomin - demo

2017 - year

1st - rank

Featuring an endless 3D zoom-in effect with a polished design and a kicking bassline. Implemented, for example, by manipulating vertex buffers on the GPU.

This demo was made with love and care and is special to us, as it was our first win in the Assembly demo competition. \o/
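A common way to build an endless zoom, shown here as a generic technique rather than the exact zoomin implementation (which worked on vertex buffers on the GPU), is to draw several copies of the content at exponentially spaced scales and wrap each copy back to the smallest scale once it has grown through a full cycle, fading it out at both ends so the wrap is invisible. The `Layer` struct and `zoomLayers` function below are hypothetical.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Layer { float scale; float alpha; };

// Generic endless-zoom sketch: 'layers' copies of a scene at exponentially
// spaced scales. Each layer's phase wraps from 1 back to 0, and its alpha is
// zero at both ends of the cycle, so the jump back to scale 1 is not visible.
std::vector<Layer> zoomLayers(double time, double speed, int layers, double base) {
    assert(layers > 0 && base > 1.0);
    const double kPi = 3.14159265358979;
    std::vector<Layer> out;
    double z = time * speed;  // total zoom, measured in layer steps
    for (int i = 0; i < layers; ++i) {
        double p = std::fmod(z + i, double(layers)) / layers;  // phase in [0,1)
        if (p < 0) p += 1.0;                                    // negative time
        double scale = std::pow(base, p * layers);  // grows by 'base' per step
        double alpha = std::sin(kPi * p);           // 0 at the wrap, 1 mid-cycle
        out.push_back({ float(scale), float(alpha) });
    }
    return out;
}
```

The renderer would then draw each layer's content scaled by `scale` and blended with `alpha`. The whole layer set repeats every `layers / speed` seconds, so the zoom can run forever without a visible cut.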

Assembly - event

liquixion - the demo man - demo

2016 - year

2nd - rank

Liquid-like particle blends, some fancy 3D zoomers, a novel screen-space GI and well-chosen textures combined with various shader effect tricks give this demonstration its fresh look.

Assembly - event

reflexion - demo

2013 - year

2nd - rank

The liquids of the planet come alive, waves propagate on the surfaces of 3D models and some surprising dives deeper into the 3D models are shown.

Breakpoint - event

concentrate - demo

2008 - year

8th out of 24 - rank

Our first demo with GPU shaders. It also includes real-time synchronization of the visuals to the music's FFT data. The demo tool created for this demo offered a thumbnail strip of images when rewinding and the possibility to insert comments into the timeline. Made with passion and love.

Stream - event

siemen - 4k

2008 - year

1st - rank

One of the world's first 4k demonstrations with GPU raymarching of surface formulas. It also includes a completely custom-coded CPU-side music synthesizer, all crunched into a 4k executable with the help of the Crinkler compressing linker and heavy-duty C, assembler and shader code size optimizations.
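GPU raymarching of surface formulas is usually done with sphere tracing: starting from the camera, the ray advances by the value of a distance function until that value gets close to zero. The C++ sketch below runs the same loop on the CPU for a single ray against a unit sphere; in a 4k intro this loop would live in a pixel shader, and the distance function, step limit and epsilon are illustrative assumptions. Sphere tracing needs a formula that never overestimates the distance to the surface, otherwise the ray can step through it.

```cpp
#include <cassert>
#include <cmath>

struct V3 { double x, y, z; };
V3 add(V3 a, V3 b) { return { a.x + b.x, a.y + b.y, a.z + b.z }; }
V3 mul(V3 a, double s) { return { a.x * s, a.y * s, a.z * s }; }
double length(V3 a) { return std::sqrt(a.x * a.x + a.y * a.y + a.z * a.z); }

// Example surface formula: signed distance to a sphere of radius 1 at the origin.
double sceneDistance(V3 p) { return length(p) - 1.0; }

// Sphere tracing along a normalised direction. Returns the distance to the
// hit point, or -1 if the ray misses within the step and distance limits.
double raymarch(V3 origin, V3 dir, int maxSteps = 128, double maxDist = 100.0,
                double eps = 1e-4) {
    double t = 0.0;
    for (int i = 0; i < maxSteps && t < maxDist; ++i) {
        double d = sceneDistance(add(origin, mul(dir, t)));
        if (d < eps) return t;  // close enough to the surface: a hit
        t += d;                 // safe step: nothing is closer than d
    }
    return -1.0;
}
```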

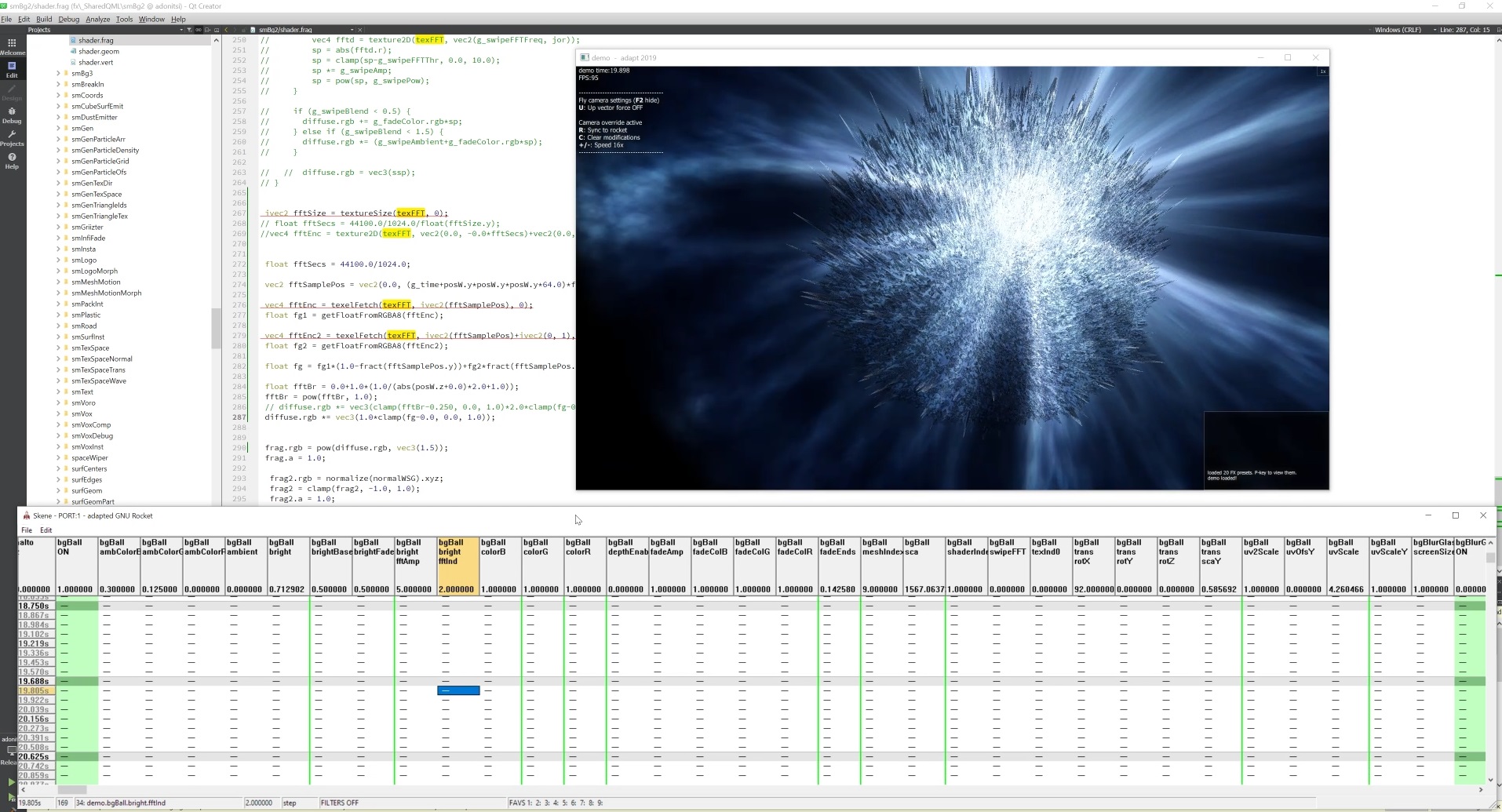

THE BASELINE WITH ROCKET

Our demos are made with love and care using self-made graphics engines and mostly self-made tools.

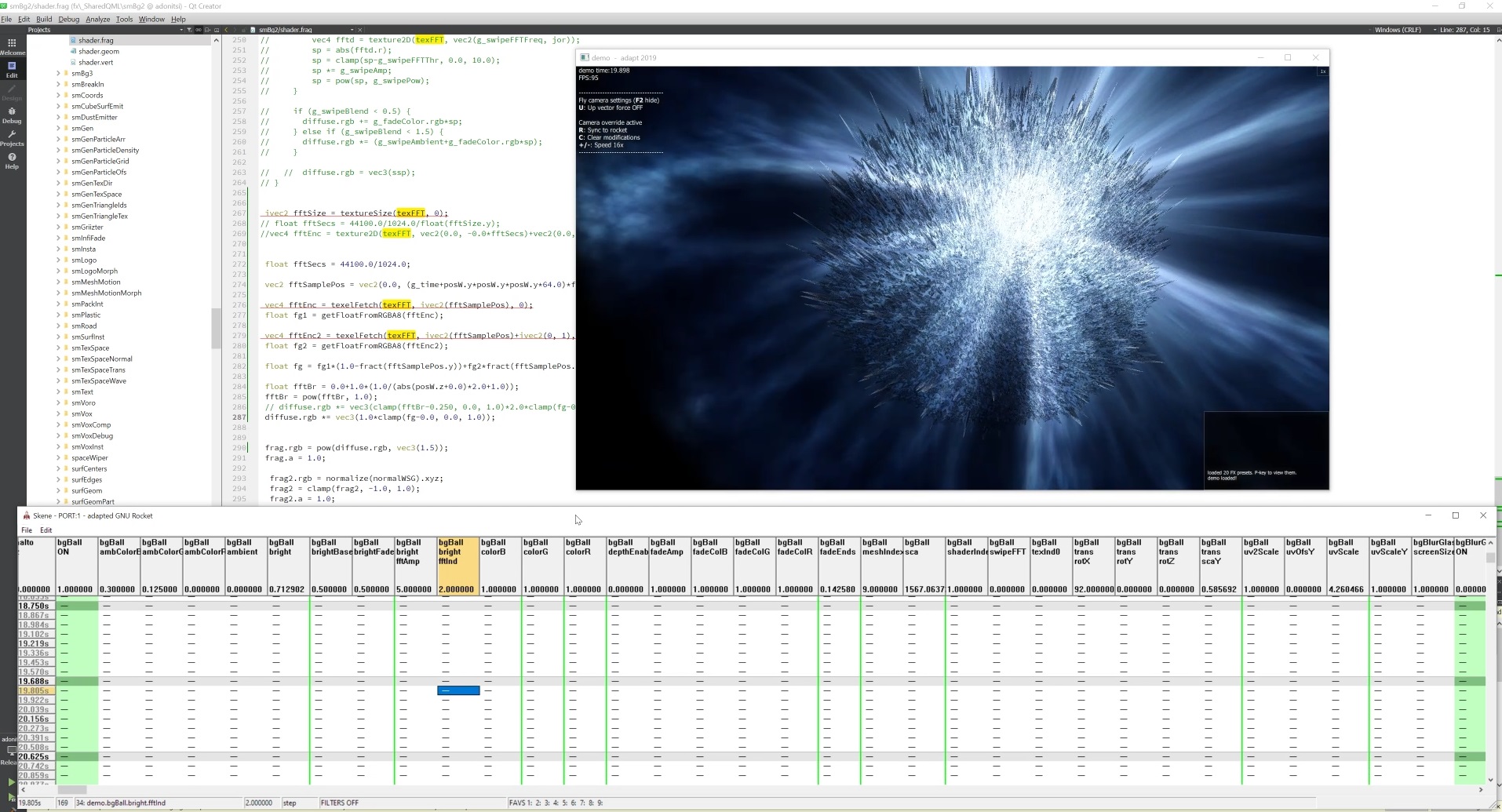

As mentioned in the overview, in some cases we have taken an existing tool, like the quite popular GNU Rocket parameter tracker, and extended it to enable a smooth demo making workflow for us.

Here is an example of what the Rocket parameter tracker (at the bottom of the image) looked like:

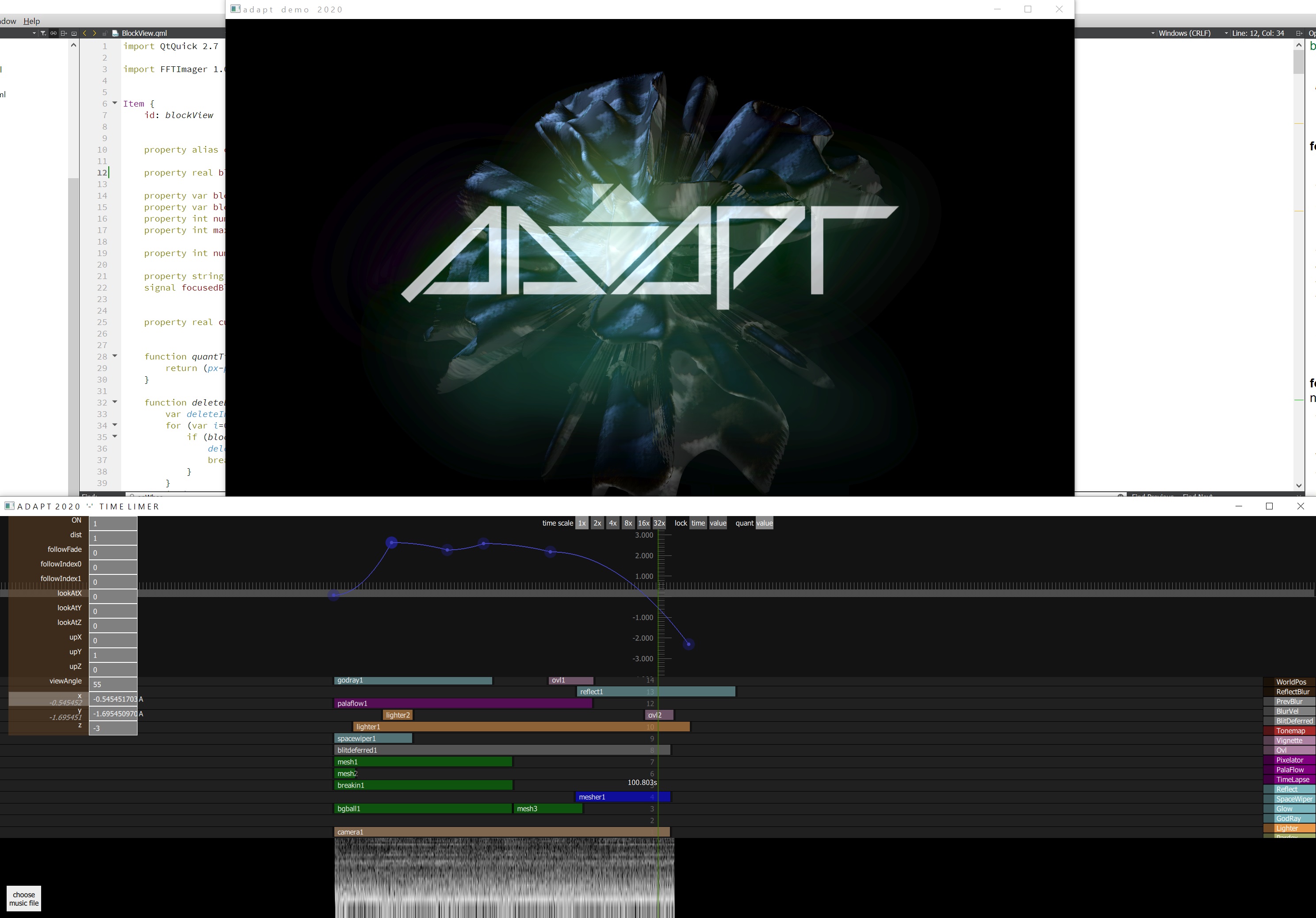

EVOLUTION TO CUSTOM TIME LINER TOOLING

The Adapted Rocket was something that we actively developed and used with our DirectX9 and OpenGL demo and

intro (intros are sized limited demos - where typical limits are 4kb or 64kb executable size limits) engines from

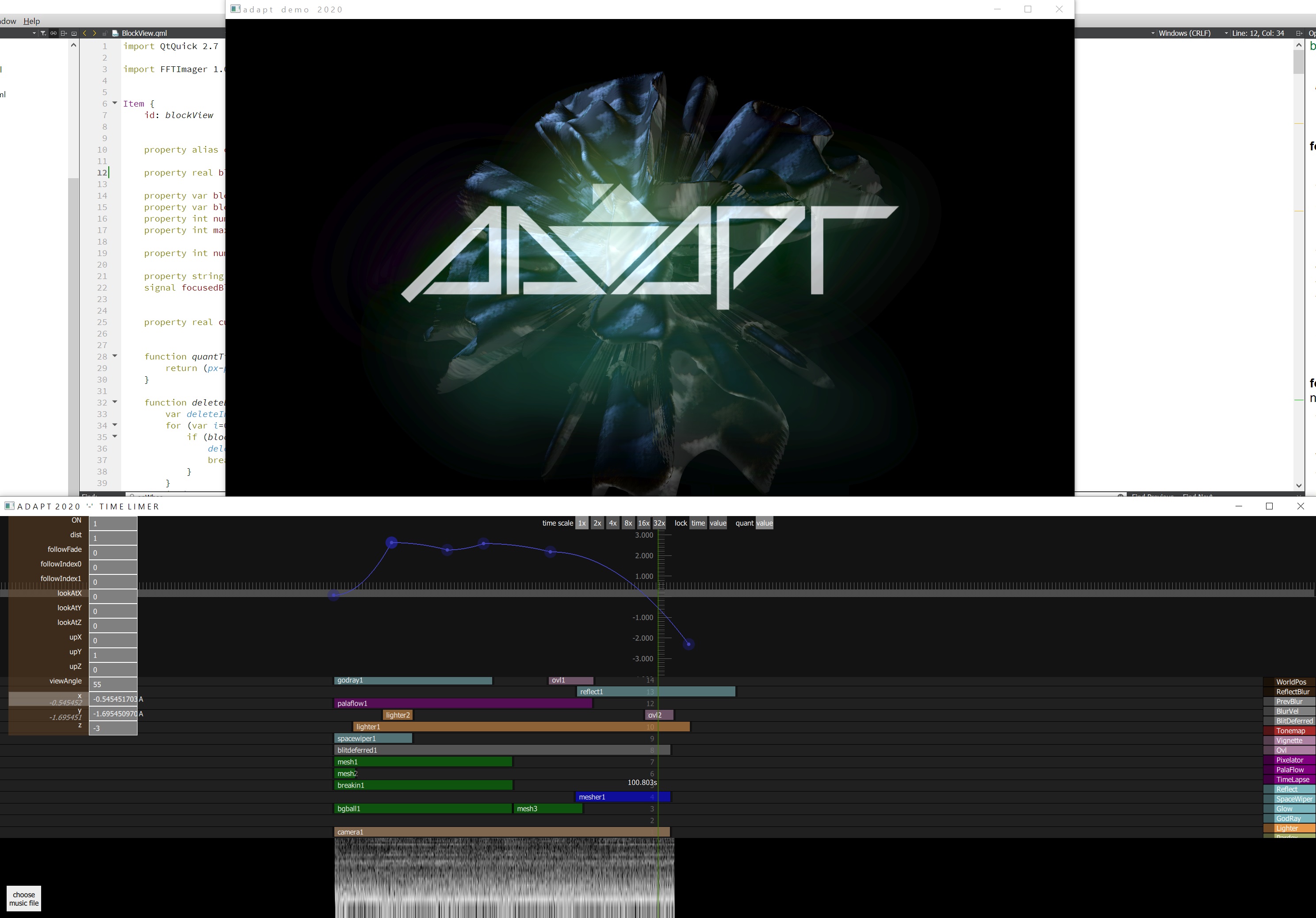

2011 to 2019 until in 2020 we pushed on developing a new Time Liner tool named as Time Limer. This tool as all of our recent

demo making tools are made by using the fabulous Qt QML declarative UI language.

An example of what the new time liner parameter adjustment tool looks like:

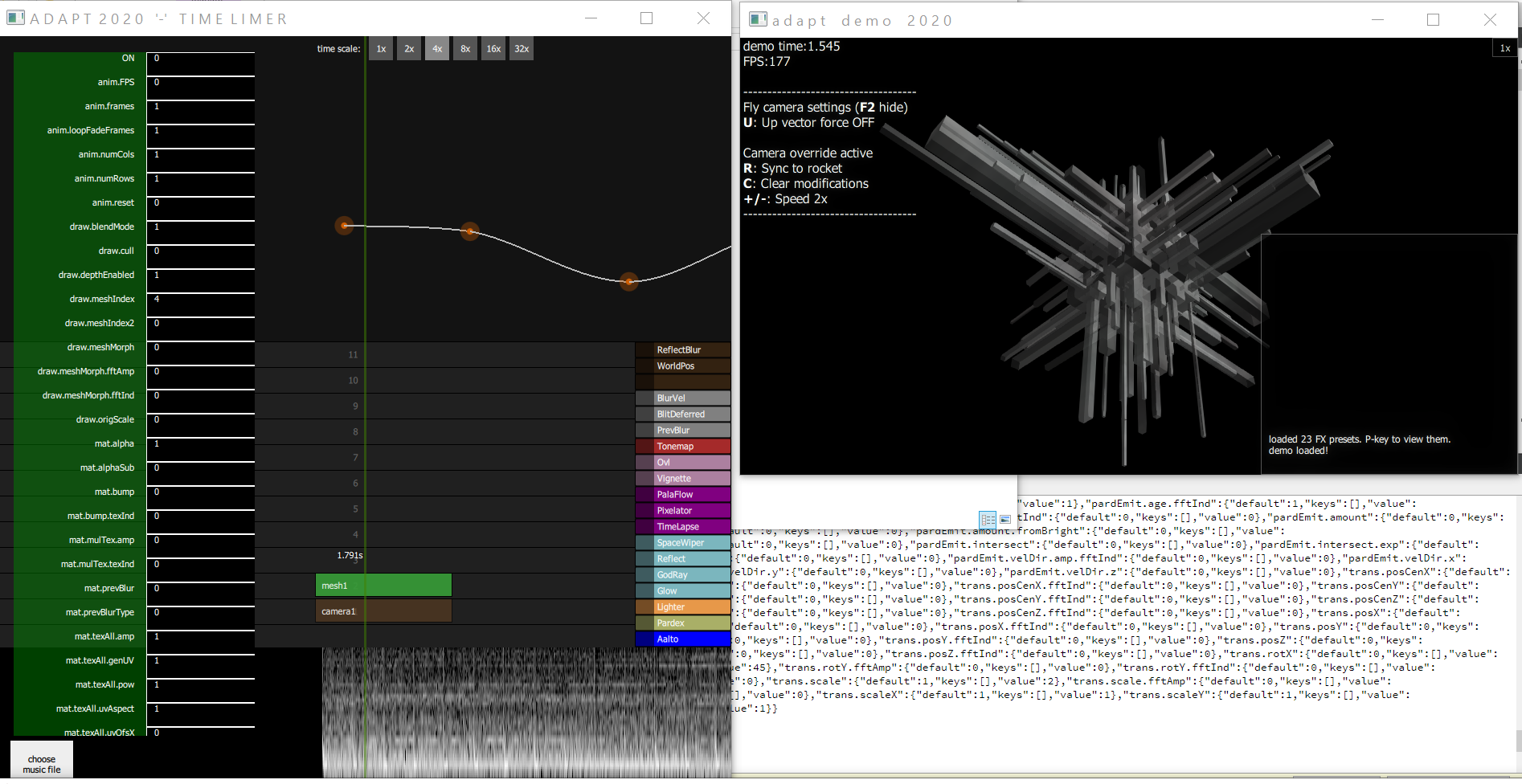

Another example of Time Limer in use:

And here is a short video showing this tool in action:

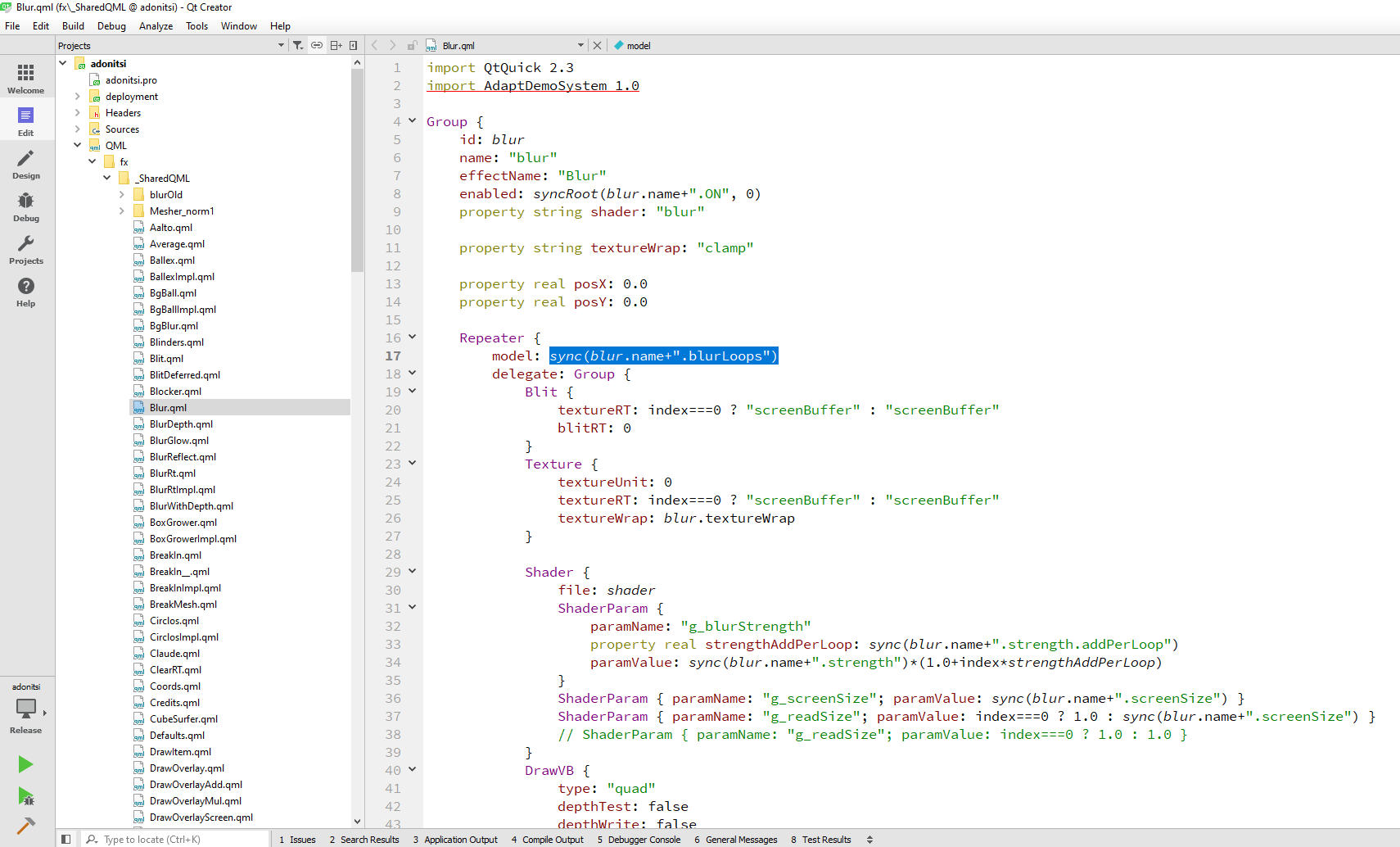

RENDERER, QML & PARAMETER BINDINGS

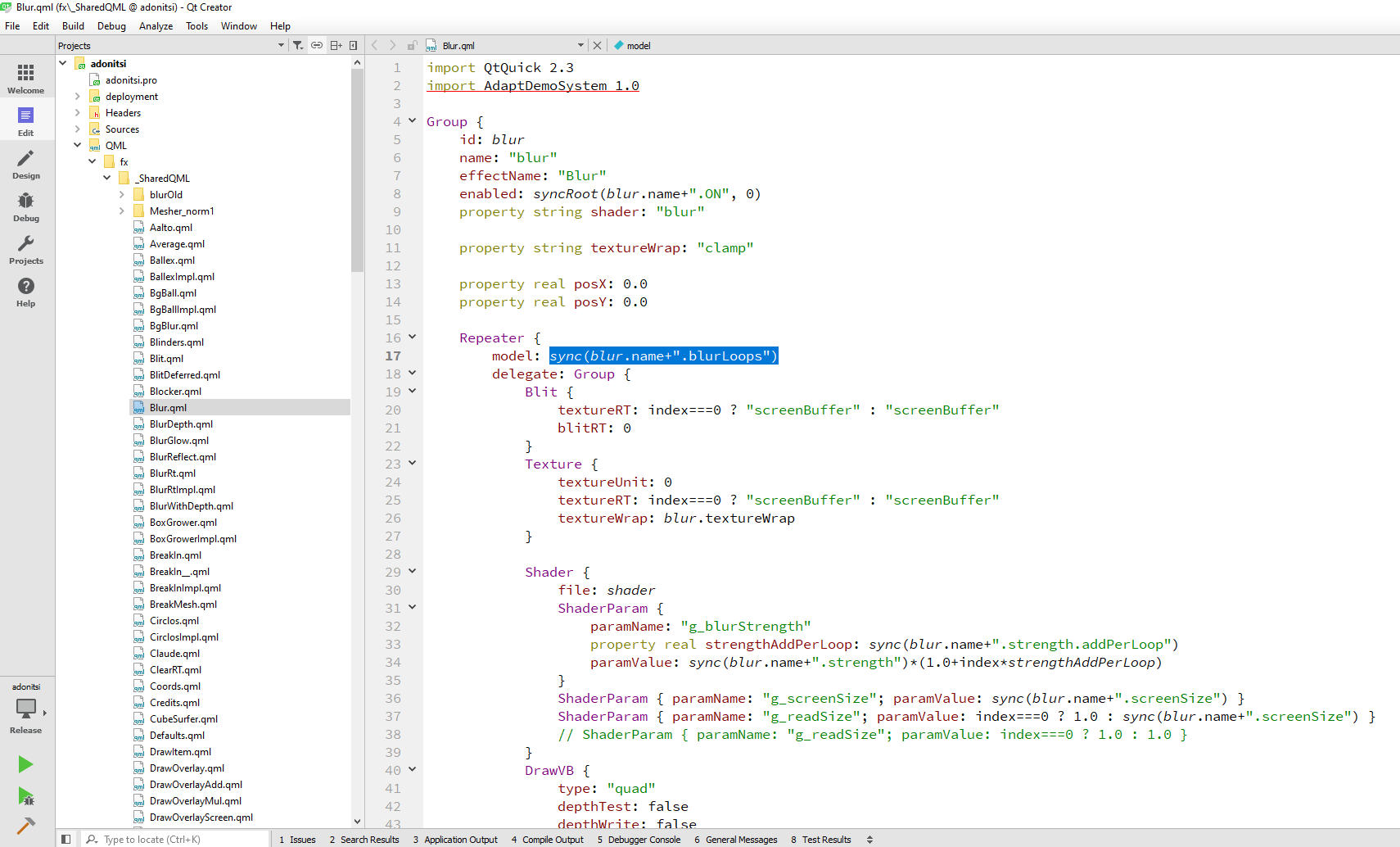

The base of the current adapt demo engine is a Qt executable written in C++ that exposes itself to QML as an import module.

The core C++ code implements our custom OpenGL renderer with a QML interface, enabling us to write the effect blocks (= nodes of a scene graph) each as its own QML module.

This allows binding any chosen parameters, for example GPU shader parameters, in a very convenient way to the connected parameter adjustment tool (first the Rocket tool explained above, and now our own Time Liner tool). The parameters of each effect can then be adjusted in real time, providing a very intuitive and natural flow for tuning the visual experience of the demonstration to perfection.

An example of one effect block written in QML script, with a parameter bound to the name ".blurLoops":

Similarly, the QML script can be used to bind any other parameters to QML items like Shader and ShaderParam (which can be seen in the bottom half of the image above), which then propagate the adjusted parameters from the parameter adjustment tool to the shader code in real time.

FFT IMAGER

Among other tools, we have also developed a tool named FFT Imager, which makes it possible to create presets for mapping FFT spectrum data taken from the demo's music track to the parameters of the visual effects. These presets are then easy to apply to any parameter.

The FFT Imager tool is shown here on the left in a short demo video of the tool in action.
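One way to picture an FFT Imager preset is as a small record saying which frequency band to read, how to scale it and how much to smooth it over time. The C++ sketch below assumes the spectrum arrives as an array of magnitudes once per frame; `FftPreset`, `FftMapper` and their fields are hypothetical and do not describe the tool's actual preset format.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical preset describing how a spectrum drives one effect parameter.
struct FftPreset {
    std::size_t firstBin;  // inclusive start of the frequency band
    std::size_t lastBin;   // inclusive end of the frequency band
    float gain;            // scales the band energy
    float offset;          // parameter value when the band is silent
    float smoothing;       // 0 = follow instantly, closer to 1 = slower
};

// Keeps the smoothed state between frames for one bound parameter.
class FftMapper {
public:
    explicit FftMapper(FftPreset p) : preset_(p), state_(p.offset) {
        assert(p.firstBin <= p.lastBin && p.smoothing >= 0.0f && p.smoothing < 1.0f);
    }

    // Feed one frame of spectrum magnitudes and get the parameter value back.
    float update(const std::vector<float>& magnitudes) {
        float band = 0.0f;
        std::size_t count = 0;
        for (std::size_t i = preset_.firstBin;
             i <= preset_.lastBin && i < magnitudes.size(); ++i) {
            band += magnitudes[i];
            ++count;
        }
        if (count > 0) band /= float(count);  // average magnitude in the band
        float target = preset_.offset + preset_.gain * band;
        // One-pole low-pass filter so the visuals do not flicker frame to frame.
        state_ = preset_.smoothing * state_ + (1.0f - preset_.smoothing) * target;
        return state_;
    }

private:
    FftPreset preset_;
    float state_;
};
```

Because the preset is just data, the same `FftPreset` can be attached to any parameter, for example a glow intensity driven by the lowest bins where a kick drum sits.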

PRESETS

The concept of presets is important wherever the workflow of a creative process needs to be improved.

Be it finding good parameters for an effect block and making a preset of them for easy later use, or making a preset of a group of effect blocks so that it can easily be copied and applied (possibly with tweaked parameters) at some other point of the timeline.
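As a minimal sketch of the preset idea, the C++ below captures the current values of a group of parameters into a snapshot and writes that snapshot back as keys at another point on the timeline, optionally with tweaked values. `Timeline`, `Preset`, `capture` and `applyAt` are hypothetical, and the timeline simply holds the last key's value (step interpolation) to keep things short.

```cpp
#include <cassert>
#include <iterator>
#include <map>
#include <string>
#include <vector>

using Preset = std::map<std::string, float>;  // parameter name -> value

// Hypothetical timeline: per parameter, keys sorted by time (step interpolation).
struct Timeline {
    std::map<std::string, std::map<double, float>> tracks;

    void setKey(const std::string& param, double time, float value) {
        assert(time >= 0.0);  // assumes the timeline starts at zero
        tracks[param][time] = value;
    }

    // Value of the last key at or before 'time' (0 if there is none).
    float valueAt(const std::string& param, double time) const {
        auto t = tracks.find(param);
        if (t == tracks.end()) return 0.0f;
        auto it = t->second.upper_bound(time);  // first key after 'time'
        if (it == t->second.begin()) return 0.0f;
        return std::prev(it)->second;
    }

    // Snapshot a group of parameters at 'time' into a preset.
    Preset capture(const std::vector<std::string>& params, double time) const {
        Preset p;
        for (const auto& name : params) p[name] = valueAt(name, time);
        return p;
    }

    // Write a preset as keys at 'time', with optional per-parameter tweaks.
    void applyAt(const Preset& preset, double time, const Preset& tweaks = {}) {
        for (const auto& [name, value] : preset) {
            auto tw = tweaks.find(name);
            setKey(name, time, tw != tweaks.end() ? tw->second : value);
        }
    }
};
```

Bound to two keyboard shortcuts, `capture` and `applyAt` give the quick save-here, apply-there workflow with very little code.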

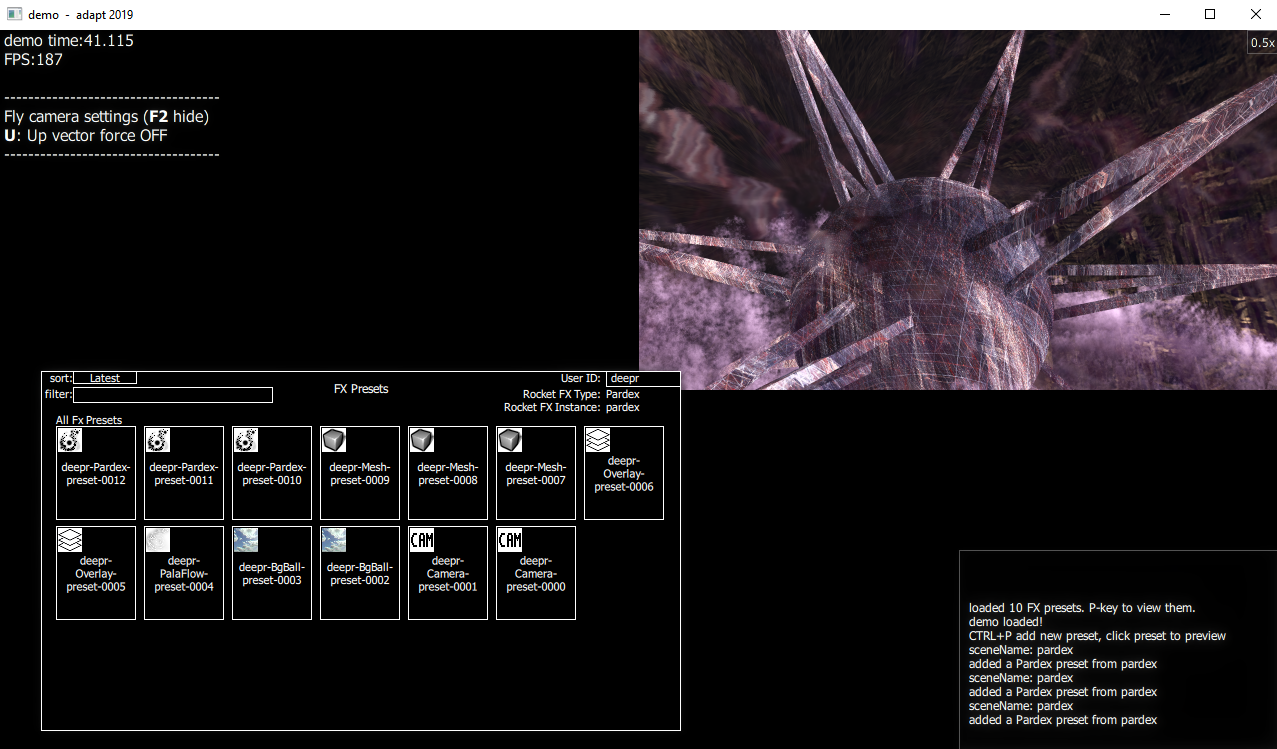

As example in our 2019 demo tooling we had a preset grid where effect block parameters could be saved quickly with

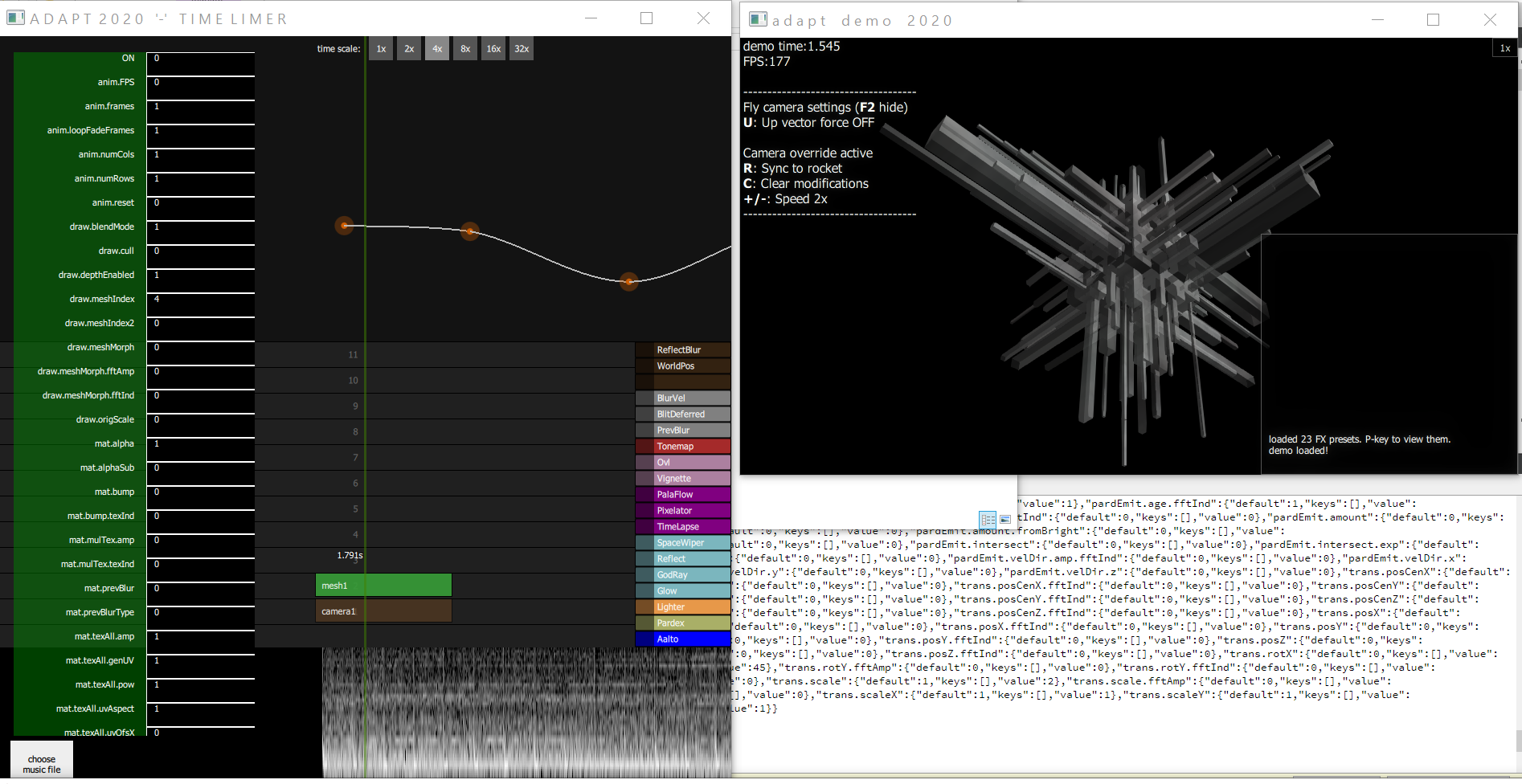

pressing the attached shortcut key-combo, and applied to the current moment of time with another shortcut.

This effect preset tool is shown in use in the bottom left part of the screenshot above.

FLY CAMERA

One more example of a tooling feature that makes creating these kinds of demos natural is the "fly camera". This approach greatly benefits the demo making flow by letting us fly around in the 3D scene of the demo with a combination of keyboard and mouse buttons. With a one-button shortcut, the current camera parameters can then be synchronized into the parameter tool. On the parameter tool side, these recorded key points can be further tweaked as necessary, for example by changing the interpolation type between the key points or by tuning the recorded key values.
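The fly camera boils down to two parts: integrating the position from keyboard input along a view direction built from mouse-driven yaw and pitch, and exporting the current state as parameter values when the shortcut is pressed. The C++17 sketch below assumes a Y-up world where yaw 0 looks down -Z; `FlyInput`, `FlyCamera`, `recordKeys` and the `camera.*` parameter names are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <map>
#include <string>

// Hypothetical per-frame input: movement keys and mouse delta.
struct FlyInput {
    bool forward = false, back = false, left = false, right = false;
    float mouseDx = 0.0f, mouseDy = 0.0f;  // pixels moved since the last frame
};

struct FlyCamera {
    float x = 0, y = 0, z = 0;   // position
    float yaw = 0, pitch = 0;    // radians; yaw 0 looks down -Z
    float speed = 5.0f;          // units per second
    float sensitivity = 0.003f;  // radians per pixel

    void update(const FlyInput& in, float dt) {
        assert(dt >= 0.0f);
        yaw += in.mouseDx * sensitivity;
        pitch -= in.mouseDy * sensitivity;
        pitch = std::clamp(pitch, -1.5f, 1.5f);  // avoid flipping over the poles
        // Forward vector from yaw and pitch (Y up).
        float fx = std::sin(yaw) * std::cos(pitch);
        float fy = std::sin(pitch);
        float fz = -std::cos(yaw) * std::cos(pitch);
        // Right vector stays horizontal (forward x up, ignoring pitch).
        float rx = std::cos(yaw), rz = std::sin(yaw);
        float move = speed * dt;
        float f = float(in.forward) - float(in.back);
        float s = float(in.right) - float(in.left);
        x += (fx * f + rx * s) * move;
        y += fy * f * move;
        z += (fz * f + rz * s) * move;
    }

    // On the shortcut: export the camera state as named parameter values that
    // the timeline tool can store as keys at the current time.
    std::map<std::string, float> recordKeys() const {
        return { {"camera.x", x}, {"camera.y", y}, {"camera.z", z},
                 {"camera.yaw", yaw}, {"camera.pitch", pitch} };
    }
};
```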

FINAL POINTS ABOUT THE IMPORTANCE OF THE REAL TIME EDITS

Real-time editability and instant visual feedback when modifying something in the demo are the key parts of a well-functioning and smooth demo making workflow. They make it possible to quickly create enjoyable visual experiences with a tight feedback loop.

That is why real-time editability is one of the fundamental drivers of our adapt demo tool development.

In the end, anything that you can change or adjust should be reflected instantly, without requiring a manual reload.

These changeable and adjustable aspects include, for example, the parameters of the effect blocks, the structure of the effect block node stacks and even the shader code itself.

Our mentality in adapt systems development is that all of these are implemented with the ability to auto-load and apply any changes quickly and automatically, in real time.
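One simple way to get this auto-load behaviour is to poll the last write time of every watched file (shaders, QML, presets) regularly, for example once per frame, and fire a reload callback when it changes. The C++17 sketch below uses only `std::filesystem`; the `FileWatcher` class is hypothetical, and a real tool might instead rely on OS change notifications or Qt's `QFileSystemWatcher`.

```cpp
#include <cassert>
#include <filesystem>
#include <functional>
#include <map>
#include <system_error>
#include <utility>

namespace fs = std::filesystem;

// Hypothetical polling watcher: call poll() regularly, e.g. once per frame.
class FileWatcher {
public:
    using Callback = std::function<void(const fs::path&)>;

    void watch(const fs::path& file, Callback onChange) {
        assert(onChange);
        entries_[file] = { stamp(file), std::move(onChange) };
    }

    // Fires callbacks for changed files; returns how many changed this poll.
    int poll() {
        int changed = 0;
        for (auto& [path, entry] : entries_) {
            fs::file_time_type now = stamp(path);
            if (now != entry.lastWrite) {
                entry.lastWrite = now;
                entry.onChange(path);  // e.g. recompile the shader, reload the QML
                ++changed;
            }
        }
        return changed;
    }

private:
    struct Entry { fs::file_time_type lastWrite; Callback onChange; };

    // Last write time, or a default (epoch) value if the file is missing.
    static fs::file_time_type stamp(const fs::path& p) {
        std::error_code ec;
        auto t = fs::last_write_time(p, ec);
        return ec ? fs::file_time_type{} : t;
    }

    std::map<fs::path, Entry> entries_;
};
```

A file that disappears (for example mid-save) is reported as a change too, so the reload callback should cope with a file that is briefly absent.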

This website (c) adapt 2006-2022 (updated by deepr on 2022-03-01)